The Threat Landscape in 2026

In 2023, Samsung engineers accidentally leaked internal source code and meeting notes to ChatGPT. The data was ingested and potentially used for model training. Samsung responded by banning ChatGPT company-wide.

That was three years ago, and the risks have only multiplied. With agentic AI systems that can autonomously browse the web, call APIs, and execute code, the blast radius of a compromised prompt is orders of magnitude larger.

OWASP lists prompt injection as the #1 LLM vulnerability. In agentic systems, a single injected prompt in a document processed by your AI could trigger a cascade of autonomous actions — data retrieval, API calls, email sends, file modifications — all without a human in the loop.

Without an AI gateway, none of these attack vectors have a detection layer. Every direct LLM call is an unmonitored entry point.

Data Leakage — The Most Common Risk

Every time someone on your team uses an LLM, they're sending data. The question is: what data, and where does it go?

- Accidental PII exposure — an analyst pastes a customer export into ChatGPT. Names, emails, and transactions are now in a third-party system.

- Credentials in prompts — a developer pastes a debugging script that includes API keys or database credentials.

- Internal IP and strategy — a product manager includes internal roadmap information in AI context.

- Synthetic data reconstruction — modern LLMs can reconstruct memorised training data under certain prompting conditions.

How a Gateway Eliminates These Risks

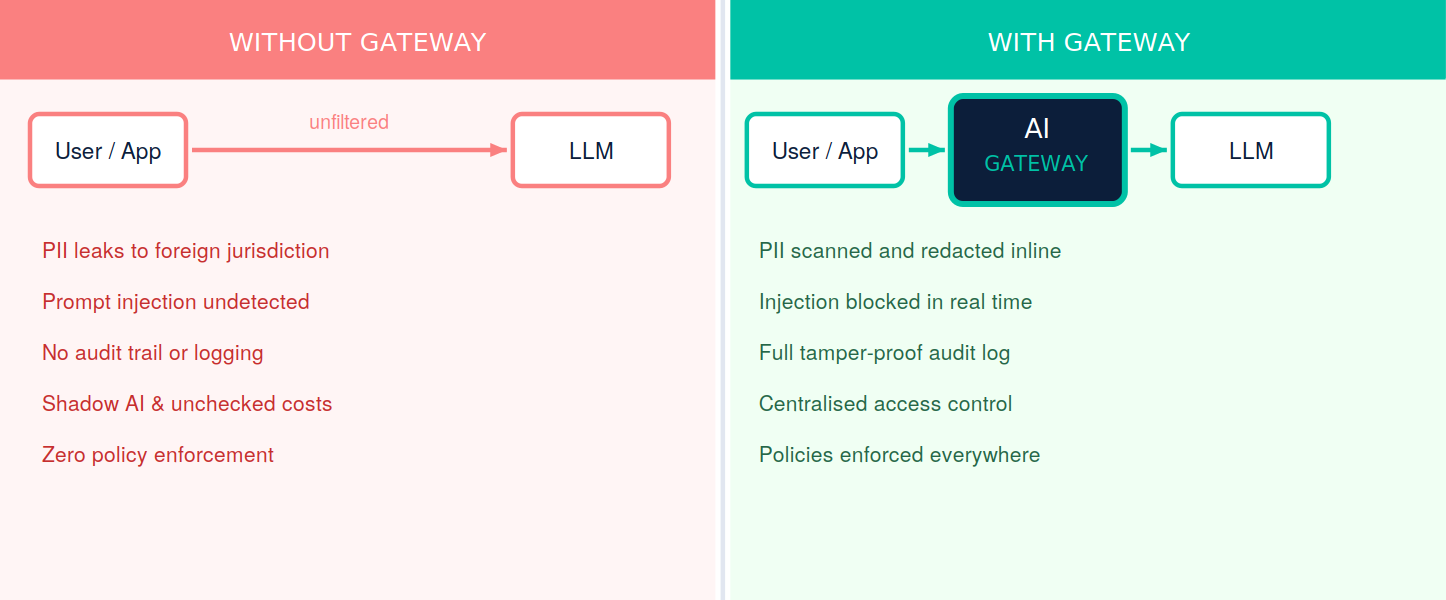

The diagram below contrasts the two paths: direct LLM access on the left, gateway-mediated access on the right. The difference between the two is the difference between unmanaged risk and governed infrastructure.

- Prompt injection mitigation — real-time scanning of all inputs and outputs for injection patterns.

- DLP and PII detection — every prompt scanned using regex, NER, and AI classifiers. Sensitive content automatically redacted or blocked.

- Authentication and access control — centralised key management eliminates shadow API keys.

- Audit trails — every interaction logged with full context. Tamper-resistant and exportable.

- Agentic guardrails — every step in an autonomous workflow validated against policy before execution.

Worried about what your team is sending to LLMs right now?

Talk to Cloud Shuttle about a fast AI governance assessment.

RELATED_NODES

NODE_CHAIN // SIG_FAST

CloudShuttle Insights